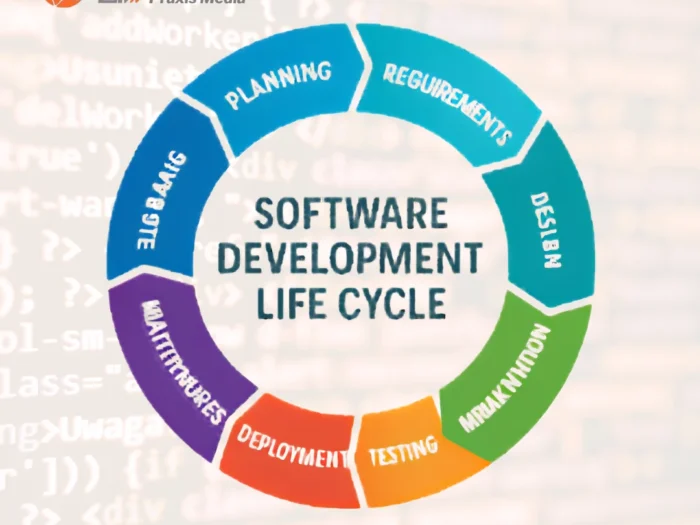

As AI-assisted coding tools continue to evolve, developers and enterprises are increasingly concerned about performance, efficiency, and scalability. One such tool gaining traction is FauxPilot, an open-source, self-hosted alternative to GitHub Copilot that runs code-generation models locally. But a critical technical question arises: can FauxPilot scale effectively across multiple GPUs in real-world deployments? This is especially relevant for organizations managing large-scale development workflows or running high-throughput inference pipelines.

FauxPilot’s Architecture

FauxPilot is built to function as a self-hosted AI coding assistant, serving transformer-based language models similar to those used in commercial copilots. Its default setup serves Salesforce CodeGen models through NVIDIA’s Triton Inference Server with the FasterTransformer backend, and a Python backend built on Hugging Face Transformers is also available; both support GPU acceleration. However, when discussing scaling across multiple GPUs, the conversation shifts from basic deployment to distributed computing architecture.

FauxPilot is not a distributed system in its own right. Instead, it relies on the underlying inference stack to manage GPU utilization. Its setup script asks how many GPUs to use and can split a model across them, but anything beyond that, such as multiple replicas, multi-node clusters, or load balancing, requires additional configuration and infrastructure planning.

Single GPU vs Multi-GPU Performance

In a single-GPU setup, FauxPilot performs adequately for individual developers or small teams. Latency is manageable, and inference speed depends largely on model size. However, when workloads increase, such as when many requests arrive concurrently, the limitations become evident. This is where scaling across multiple GPUs becomes a necessity rather than an option.

Multi-GPU setups allow parallel processing of requests, enabling higher throughput and reduced response times. For example, using NVIDIA GPUs like the A100 (which can cost around $10,000–$15,000 per unit) significantly boosts performance. However, simply adding more GPUs does not guarantee linear performance gains, because communication and scheduling overhead grow with the number of devices.
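One way to see why gains are not linear is Amdahl's law, which this article does not otherwise rely on and which is used here only as a simplified model. The sketch below assumes a hypothetical fraction of the work that can be spread across GPUs; the 0.9 value is illustrative and is not a FauxPilot measurement.

```python
def amdahl_speedup(parallel_fraction: float, num_gpus: int) -> float:
    """Ideal speedup under Amdahl's law for a given parallelizable fraction."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / num_gpus)


if __name__ == "__main__":
    # Assumption: 90% of the work parallelizes; the rest is serial overhead.
    for gpus in (1, 2, 4, 8):
        speedup = amdahl_speedup(0.9, gpus)
        efficiency = speedup / gpus
        print(f"{gpus} GPU(s): {speedup:.2f}x speedup, {efficiency:.0%} efficiency")
```

Under this assumption, per-GPU efficiency falls as devices are added, which is the pattern the paragraph above describes.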

Techniques for Multi-GPU Scaling

To achieve effective scaling across multiple GPUs, FauxPilot deployments typically rely on distributed inference techniques such as:

- Data Parallelism: Running a full copy of the model on each GPU and splitting incoming requests across the copies so they are processed simultaneously.

- Model Parallelism: Dividing the model's weights across GPUs when the model is too large for a single device.

- Pipeline Parallelism: Breaking the model into sequential stages of layers, with each stage handled by a different GPU.

Frameworks like PyTorch's torch.distributed package or DeepSpeed can be integrated to facilitate these approaches. However, implementing these solutions requires advanced expertise in distributed systems and GPU orchestration.
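The partitioning ideas behind two of these techniques can be sketched in plain Python, without any GPU library. The function names below are hypothetical and only show how work or layers would be divided; they are not part of FauxPilot, PyTorch, or DeepSpeed.

```python
from itertools import cycle
from typing import Dict, List


def assign_round_robin(requests: List[str], num_gpus: int) -> Dict[int, List[str]]:
    """Data parallelism: each GPU holds a full model copy and takes a share of requests."""
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    assignment: Dict[int, List[str]] = {gpu: [] for gpu in range(num_gpus)}
    for request, gpu in zip(requests, cycle(range(num_gpus))):
        assignment[gpu].append(request)
    return assignment


def split_layers_into_stages(num_layers: int, num_gpus: int) -> List[range]:
    """Pipeline parallelism: contiguous layer ranges, one stage per GPU."""
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    base, extra = divmod(num_layers, num_gpus)
    stages: List[range] = []
    start = 0
    for gpu in range(num_gpus):
        # The first `extra` stages take one additional layer each.
        size = base + (1 if gpu < extra else 0)
        stages.append(range(start, start + size))
        start += size
    return stages


if __name__ == "__main__":
    print(assign_round_robin(["req-a", "req-b", "req-c", "req-d", "req-e"], 2))
    print(split_layers_into_stages(10, 4))
```

A real deployment would leave the actual placement to the serving framework; the sketch only makes the difference between splitting requests and splitting the model concrete.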

Infrastructure Requirements and Costs

Scaling FauxPilot across multiple GPUs is not just a software challenge; it is also a financial one. The costs include:

- Hardware Costs: High-performance GPUs range from $1,000 (mid-tier) to $15,000+ (enterprise-grade).

- Cloud Costs: Renting GPUs on platforms like AWS or Google Cloud can cost between $0.90 and $32 per hour, depending on the instance type.

- Networking: High-speed interconnects such as NVLink or InfiniBand are often required for efficient GPU communication.

- Maintenance: Ongoing costs for cooling, power, and system administration.

For small businesses, these costs can quickly escalate, making it essential to evaluate ROI before scaling.
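A rough break-even calculation can help frame that ROI question. The sketch below is a simplification under stated assumptions: it compares only purchase price against rental fees and ignores power, cooling, networking, and administration. The $10,000 unit price comes from the range quoted above, while the $12 hourly rate for a comparable four-GPU instance is a hypothetical figure chosen from within the quoted rental range.

```python
def break_even_hours(purchase_price_usd: float, hourly_rental_usd: float) -> float:
    """Hours of rental after which buying the hardware would have cost the same."""
    if hourly_rental_usd <= 0:
        raise ValueError("hourly_rental_usd must be positive")
    return purchase_price_usd / hourly_rental_usd


def monthly_rental_cost(hourly_rental_usd: float, hours_per_day: float, days: int = 30) -> float:
    """Rental spend for a month at a given daily usage."""
    return hourly_rental_usd * hours_per_day * days


if __name__ == "__main__":
    # Assumptions: four GPUs at $10,000 each versus a hypothetical
    # four-GPU cloud instance at $12 per hour.
    purchase = 4 * 10_000
    hours = break_even_hours(purchase, 12.0)
    print(f"Break-even after about {hours:,.0f} rental hours")
    print(f"Always-on rental per month: ${monthly_rental_cost(12.0, 24):,.0f}")
```

If usage is bursty rather than continuous, the hours accumulate slowly and renting may stay cheaper for much longer than the always-on case suggests.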

Challenges in Multi-GPU Deployment

Despite its potential, scaling across multiple GPUs with FauxPilot presents several technical challenges:

- Synchronization Overhead: GPUs must communicate frequently, which can introduce latency.

- Load Balancing: Uneven distribution of tasks can lead to underutilized resources.

- Complex Configuration: Setting up distributed environments requires expertise in Kubernetes, Docker, and GPU drivers.

- Model Optimization: Not all models are optimized for multi-GPU execution, requiring additional tuning.

These challenges highlight the importance of having a robust deployment strategy and experienced engineers.
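The load-balancing problem in particular is easy to illustrate. The sketch below shows a least-loaded dispatch policy, one common approach among several, using a hypothetical per-request cost estimate (for example, expected tokens to generate). It is not how FauxPilot or any specific serving framework schedules work.

```python
import heapq
from typing import List, Tuple


def dispatch_least_loaded(request_costs: List[float], num_gpus: int) -> List[int]:
    """Assign each request to the GPU with the least queued work so far.

    Returns the chosen GPU index for each request, in request order.
    """
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    # Min-heap of (queued_work, gpu_index); ties go to the lower index.
    heap: List[Tuple[float, int]] = [(0.0, gpu) for gpu in range(num_gpus)]
    heapq.heapify(heap)
    placement: List[int] = []
    for cost in request_costs:
        load, gpu = heapq.heappop(heap)
        placement.append(gpu)
        heapq.heappush(heap, (load + cost, gpu))
    return placement


if __name__ == "__main__":
    # One expensive request followed by four cheap ones, on two GPUs.
    print(dispatch_least_loaded([5, 1, 1, 1, 1], 2))
```

With this input, the expensive request occupies one GPU while the cheap ones all go to the other, leaving queued work of 5 and 4. A plain round-robin policy would alternate and end up with 7 and 2.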

Practical Use Cases

Organizations that benefit most from scaling across multiple GPUs with FauxPilot include:

- Large Development Teams: Handling simultaneous code generation requests.

- AI Research Labs: Running experiments with large language models.

- Enterprise SaaS Platforms: Offering AI-powered coding assistance to thousands of users.

In these scenarios, multi-GPU scaling helps keep response times consistent as the number of concurrent users grows.

Optimization Strategies

To maximize the benefits of scaling across multiple GPUs, consider the following strategies:

- Batching Requests: Grouping multiple queries to improve GPU utilization.

- Quantization: Storing model weights at lower precision (for example, INT8 instead of FP16) so the model needs less memory and fits on fewer GPUs.

- Caching Mechanisms: Storing frequent responses to reduce computation.

- Monitoring Tools: Using tools like Prometheus or Grafana to track performance.

These optimizations can significantly improve efficiency while reducing operational costs.
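Two of these strategies lend themselves to quick arithmetic. The sketch below estimates weight memory at different precisions and groups prompts into fixed-size batches. It assumes a hypothetical 16-billion-parameter model and a 24 GB GPU, and it counts weights only; activations, attention caches, and framework overhead would add to the real footprint.

```python
import math
from typing import List

# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Approximate memory for model weights only, in GB (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def min_gpus_for_weights(num_params: float, dtype: str, gpu_memory_gb: float) -> int:
    """Lower bound on GPUs needed just to hold the weights."""
    return math.ceil(weight_memory_gb(num_params, dtype) / gpu_memory_gb)


def make_batches(prompts: List[str], max_batch_size: int) -> List[List[str]]:
    """Group prompts into batches of at most max_batch_size, preserving order."""
    if max_batch_size < 1:
        raise ValueError("max_batch_size must be at least 1")
    return [prompts[i:i + max_batch_size] for i in range(0, len(prompts), max_batch_size)]


if __name__ == "__main__":
    params = 16e9  # hypothetical model size
    for dtype in ("fp16", "int8"):
        gb = weight_memory_gb(params, dtype)
        gpus = min_gpus_for_weights(params, dtype, gpu_memory_gb=24)
        print(f"{dtype}: {gb:.0f} GB of weights, at least {gpus} x 24 GB GPU(s)")
    print(make_batches(["p1", "p2", "p3", "p4", "p5"], max_batch_size=2))
```

Under these assumptions, moving from FP16 to INT8 halves the weight memory and lowers the minimum GPU count from two to one, which is the kind of saving quantization is meant to deliver.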

AI Perspective: Is Bigger Always Better?

This raises a broader question: does increasing GPU count always lead to better AI performance, or does optimization matter more than raw hardware?

The answer is nuanced. While scaling across multiple GPUs can enhance throughput, poorly optimized systems may waste resources. In many cases, algorithmic efficiency and model tuning yield better results than simply adding more hardware. This underscores the importance of a balanced approach to AI infrastructure.

Future Outlook

As AI tools like FauxPilot continue to evolve, native support for scaling across multiple GPUs is likely to improve. Emerging technologies such as distributed inference frameworks and specialized AI accelerators will make multi-GPU deployments more accessible. Additionally, advancements in edge computing and hybrid cloud architectures may reduce reliance on expensive centralized GPU clusters.

Conclusion

FauxPilot can indeed support scaling across multiple GPUs, but it requires deliberate configuration, advanced tooling, and significant investment in infrastructure. While the benefits include improved performance and scalability, the challenges and costs must be carefully managed. For organizations looking to implement or optimize such solutions, professional guidance can make a substantial difference.

Clients seeking expert assistance in deploying scalable AI solutions, including FauxPilot and other advanced tools, should reach out to Lead Web Praxis Media Limited for tailored strategies and implementation support.