Artificial intelligence has significantly transformed how software is built, tested, and deployed. Developers are no longer required to write every line of code manually; AI systems can now assist in planning architecture, generating code, debugging, and even managing full software development pipelines. Among the latest innovations in AI-assisted development is MetaGPT, a system designed to simulate a team of AI agents collaborating like a real software company. When comparing it with widely used models such as GPT-3.5 and GPT-4, many developers naturally ask about the success rate of MetaGPT code generation and whether it truly improves reliability and accuracy in automated programming.

How MetaGPT Generates Code

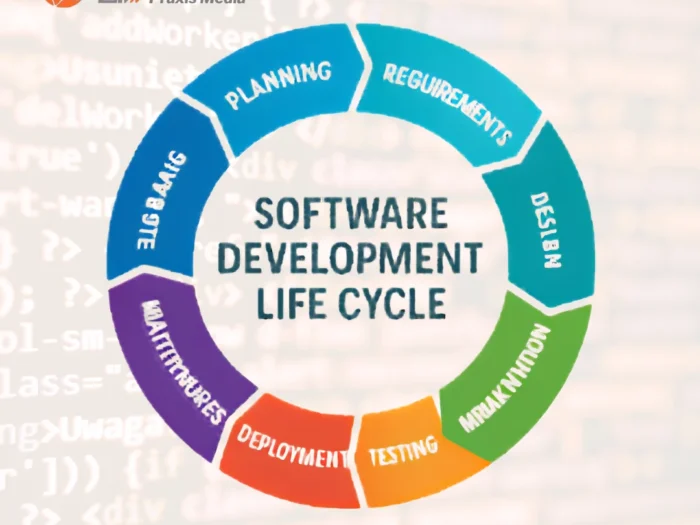

Traditional AI coding assistants rely on a single large language model that interprets prompts and produces code directly. MetaGPT, however, operates differently. Instead of acting as one AI assistant, it organizes multiple AI agents into specialized roles such as product manager, architect, engineer, and QA tester.

This workflow resembles a real development team where:

- A product manager agent interprets project requirements

- An architect agent designs system structure

- A developer agent writes the actual code

- A QA agent tests and reviews outputs

This layered structure helps explain the success rate of MetaGPT code generation, because tasks are broken into smaller, more structured steps before code is produced. The result is often cleaner architecture, better documentation, and fewer logical errors compared to simple prompt-to-code systems.
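
The hand-off between roles can be illustrated with a minimal sketch. This is not MetaGPT's actual API: the `Role`, `Artifact`, and `run_pipeline` names are hypothetical, and each role's `act` function is a stub standing in for a real model call. It only shows the idea of each role building on the outputs of the roles before it.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Artifact:
    """Output handed from one role to the next."""
    role: str
    content: str


@dataclass
class Role:
    name: str
    # Hypothetical stand-in for an LLM call: receives the context so far, returns text.
    act: Callable[[str], str]


def run_pipeline(requirement: str, roles: List[Role]) -> List[Artifact]:
    """Run roles in order; each role sees the requirement plus all earlier outputs."""
    artifacts: List[Artifact] = []
    context = requirement
    for role in roles:
        output = role.act(context)
        artifacts.append(Artifact(role.name, output))
        context = context + "\n" + output
    return artifacts


# Stub roles (assumptions for illustration only).
roles = [
    Role("product_manager", lambda ctx: "PRD: " + ctx.splitlines()[0]),
    Role("architect", lambda ctx: "DESIGN: modules=cli,core"),
    Role("engineer", lambda ctx: "CODE: def main(): ..."),
    Role("qa", lambda ctx: "REVIEW: " + ("ok" if "CODE:" in ctx else "no code found")),
]

artifacts = run_pipeline("Build a todo CLI", roles)
for artifact in artifacts:
    print(f"{artifact.role}: {artifact.content}")
```

Because the QA stub only reports "ok" when it finds an engineer output in its context, the sketch also shows why ordering matters in a staged workflow.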

From a technical standpoint, MetaGPT often runs on top of models such as GPT-4 or open-source LLMs, meaning the quality of its results also depends partly on the underlying model being used.

Success Rate Compared to GPT-3.5

When developers measure success rates in AI code generation, they usually evaluate whether the generated program:

- Compiles successfully

- Passes automated tests

- Matches functional requirements
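
One way to turn these criteria into a number is sketched below for Python snippets. The `evaluate_candidate` and `success_rate` helpers are hypothetical, and "matches functional requirements" is approximated here by the supplied tests, which is a simplification.

```python
from typing import Callable, Dict, List


def evaluate_candidate(source: str, tests: List[Callable[[Dict], bool]]) -> Dict[str, bool]:
    """Check one generated snippet: does it compile, and does it pass the tests?"""
    result = {"compiles": False, "passes_tests": False}
    try:
        code = compile(source, "<generated>", "exec")
    except SyntaxError:
        return result
    result["compiles"] = True

    namespace: Dict = {}
    try:
        # Caution: exec runs arbitrary code; use a sandbox for untrusted output.
        exec(code, namespace)
        result["passes_tests"] = all(test(namespace) for test in tests)
    except Exception:
        result["passes_tests"] = False
    return result


def success_rate(sources: List[str], tests: List[Callable[[Dict], bool]]) -> float:
    """Fraction of candidates that both compile and pass every test."""
    results = [evaluate_candidate(source, tests) for source in sources]
    passed = sum(r["compiles"] and r["passes_tests"] for r in results)
    return passed / len(results) if results else 0.0


# Three made-up candidates for the same task.
candidates = [
    "def add(a, b):\n    return a + b\n",  # correct
    "def add(a, b):\n    return a - b\n",  # compiles, but wrong logic
    "def add(a, b)\n    return a + b\n",   # syntax error
]
tests = [lambda ns: ns["add"](2, 3) == 5]

rate = success_rate(candidates, tests)
print(f"success rate: {rate:.0%}")
```

Reported success rates depend heavily on how strict the tests are, so figures from different sources are only comparable when the evaluation setup is the same.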

GPT-3.5 has historically been a strong baseline for AI coding tasks. However, it often struggles with:

- multi-file project structures

- dependency management

- long or complex prompts

- maintaining logic across many modules

Because MetaGPT structures tasks step-by-step through multiple agents, it tends to achieve a higher success rate than raw GPT-3.5 prompts in complex project environments.

Informal benchmarks shared by AI research communities and open-source testers point to roughly the following ranges:

| Model/System | Approximate Success Rate for Complex Tasks |
| --- | --- |
| GPT-3.5 direct prompts | 40% – 55% |
| MetaGPT (using GPT-3.5 backend) | 55% – 70% |

These improvements occur primarily because MetaGPT forces a planning stage before code generation.

Another factor to consider is cost. GPT-3.5 API pricing is relatively low:

- Input tokens: about $0.0005 per 1K tokens

- Output tokens: about $0.0015 per 1K tokens

This makes GPT-3.5 an economical option, especially when MetaGPT uses it as the backend model.
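
As a rough worked example, the cost of a single request can be computed from the per-1K prices quoted above. The token counts are hypothetical, and actual prices may have changed since this was written.

```python
# Prices quoted in this article, in USD per 1K tokens (they may be out of date).
GPT35_INPUT_PER_1K = 0.0005
GPT35_OUTPUT_PER_1K = 0.0015


def call_cost(
    input_tokens: int,
    output_tokens: int,
    input_per_1k: float = GPT35_INPUT_PER_1K,
    output_per_1k: float = GPT35_OUTPUT_PER_1K,
) -> float:
    """Cost in USD of one API call, given token counts and per-1K prices."""
    return input_tokens / 1000 * input_per_1k + output_tokens / 1000 * output_per_1k


# Hypothetical request: 2,000 prompt tokens and 1,000 generated tokens.
cost = call_cost(2000, 1000)
print(f"${cost:.4f}")
```

At these prices the example request costs a quarter of a cent, which is why GPT-3.5 stays affordable even when a workflow issues many calls.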

Success Rate Compared to GPT-4

While GPT-3.5 is capable, GPT-4 significantly improves reasoning, long-context understanding, and code quality. Many developers already report higher success rates when generating code directly with GPT-4.

However, MetaGPT can improve on this by organizing the workflow. When powered by GPT-4, MetaGPT often achieves a higher success rate on larger applications, such as web apps or automation systems.

Typical comparative estimates reported by developers include:

| System | Estimated Success Rate |
| --- | --- |
| GPT-4 direct prompts | 70% – 85% |
| MetaGPT + GPT-4 | 80% – 92% |

This improvement usually comes from structured project planning rather than raw model intelligence alone.

GPT-4 is significantly more expensive than GPT-3.5. Typical API pricing falls in these ranges:

- Input tokens: roughly $0.01 – $0.03 per 1K tokens

- Output tokens: roughly $0.03 – $0.06 per 1K tokens

When running MetaGPT with GPT-4, costs can accumulate quickly because each agent performs its own reasoning steps.
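
To see how this adds up, the sketch below sums a per-role token budget and applies the low and high ends of the GPT-4 price ranges quoted above. The per-role token counts are invented for illustration; real usage varies with the project and the framework configuration.

```python
from typing import Dict, Tuple

# Price ranges quoted in this article, USD per 1K tokens: (low, high).
GPT4_INPUT_RANGE = (0.01, 0.03)
GPT4_OUTPUT_RANGE = (0.03, 0.06)

# Hypothetical token usage per role: (input_tokens, output_tokens).
# Later roles read more context because they receive earlier outputs.
usage: Dict[str, Tuple[int, int]] = {
    "product_manager": (1000, 1500),
    "architect": (3000, 2000),
    "engineer": (6000, 4000),
    "qa": (10000, 1500),
}


def run_cost_range(usage: Dict[str, Tuple[int, int]]) -> Tuple[float, float]:
    """Return (low, high) USD cost estimates for one full multi-agent run."""
    total_in = sum(tokens_in for tokens_in, _ in usage.values())
    total_out = sum(tokens_out for _, tokens_out in usage.values())
    low = total_in / 1000 * GPT4_INPUT_RANGE[0] + total_out / 1000 * GPT4_OUTPUT_RANGE[0]
    high = total_in / 1000 * GPT4_INPUT_RANGE[1] + total_out / 1000 * GPT4_OUTPUT_RANGE[1]
    return low, high


low, high = run_cost_range(usage)
print(f"estimated cost per run: ${low:.2f} to ${high:.2f}")
```

Input tokens dominate the total in this example because each later role re-reads the accumulated context, which is one reason multi-agent runs cost more than a single prompt.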

Why Multi-Agent AI Improves Code Success

One major reason developers study the success rate of MetaGPT code generation is that multi-agent AI frameworks replicate real-world development processes. Instead of jumping straight into writing code, the system produces:

- Product requirement documents

- Technical architecture diagrams

- Task breakdowns

- Implementation modules

- Testing reports

This layered approach helps prevent common AI coding problems such as:

- inconsistent function definitions

- missing modules

- incomplete requirements

- poor documentation
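
Some of these problems can also be caught mechanically after generation. The sketch below is a simple heuristic, not part of MetaGPT: it uses Python's standard `ast` module to flag calls to plain function names that are not defined in any generated file and are not builtins. The `undefined_calls` helper and the sample files are hypothetical, and the check ignores imports, classes, and method calls.

```python
import ast
import builtins
from typing import Dict, Set


def defined_functions(files: Dict[str, str]) -> Set[str]:
    """Collect the names of all functions defined across the given source files."""
    names: Set[str] = set()
    for source in files.values():
        for node in ast.walk(ast.parse(source)):
            if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
                names.add(node.name)
    return names


def undefined_calls(files: Dict[str, str]) -> Dict[str, Set[str]]:
    """Map each file to the bare function names it calls that are defined nowhere."""
    known = defined_functions(files) | set(dir(builtins))
    missing: Dict[str, Set[str]] = {}
    for filename, source in files.items():
        for node in ast.walk(ast.parse(source)):
            # Only direct calls like foo(...) are checked, not obj.method(...).
            if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
                if node.func.id not in known:
                    missing.setdefault(filename, set()).add(node.func.id)
    return missing


# Made-up generated project: cli.py calls save_tasks, which no file defines.
files = {
    "core.py": "def load_tasks(path):\n    return []\n",
    "cli.py": (
        "def main():\n"
        "    tasks = load_tasks('t.json')\n"
        "    save_tasks(tasks)\n"
        "    print(len(tasks))\n"
    ),
}

print(undefined_calls(files))
```

A check like this complements the QA stage rather than replacing it, since it says nothing about whether the defined functions behave correctly.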

An interesting AI question arises here: Could future AI systems fully simulate entire software companies where every role is automated by specialized agents?

MetaGPT is already moving in that direction, showing how collaborative AI systems may outperform single models.

Practical Use Cases for MetaGPT Code Generation

Organizations exploring AI development tools often analyze the success rate of MetaGPT code generation before adopting it in production environments. In practice, MetaGPT works best for projects that require structured collaboration rather than quick snippets.

Common use cases include:

- building full-stack web applications

- generating automated business dashboards

- creating API microservices

- designing data pipelines

- producing technical documentation

For startups and agencies, MetaGPT can reduce development time by automating early project planning and prototype generation.

However, it is important to note that AI-generated code still requires human review for:

- security vulnerabilities

- performance optimization

- production deployment

MetaGPT accelerates development but does not fully replace experienced engineers.

Limitations and Considerations

Even though the success rate of MetaGPT code generation is generally higher than single-model prompting in complex projects, several limitations remain.

First, MetaGPT requires more computational resources because several agents each make their own model calls. This increases token usage and API costs.

Second, coordination between agents can sometimes introduce:

- redundant code

- overly complex documentation

- longer generation times

Third, the quality of the final output still depends heavily on the underlying language model. If MetaGPT runs on weaker models, the final code may still require significant debugging.

Finally, developers must still define clear project requirements. Poor prompts can still lead to poor outcomes regardless of how sophisticated the AI system is.

The Future of AI-Driven Software Development

The rising interest in the success rate of MetaGPT code generation reflects a broader shift toward autonomous AI development pipelines. Instead of simple AI assistants that generate snippets, future systems may manage entire product lifecycles.

Researchers are already experimenting with AI frameworks capable of:

- designing user interfaces automatically

- generating backend infrastructure

- deploying applications to cloud platforms

- monitoring software performance

If these systems continue improving, the role of developers may shift toward supervising AI systems rather than writing every line of code.

This raises another intriguing question: Will AI development frameworks eventually outperform human engineering teams in productivity and consistency?

While the answer remains uncertain, tools like MetaGPT are clearly pushing the industry in that direction.

Conclusion

The success rate of MetaGPT code generation generally surpasses direct prompting with GPT-3.5 and can even exceed that of direct GPT-4 prompting in complex multi-file projects because of its structured, multi-agent workflow. By simulating roles such as product managers, architects, developers, and testers, MetaGPT introduces a systematic approach that reduces logical errors and improves project organization.

However, developers should still consider factors such as API costs, computational overhead, and the need for human oversight before adopting the framework in real production environments. AI tools are best viewed as powerful collaborators rather than complete replacements for engineering expertise.

If your organization is exploring AI-driven software development, automation tools, or intelligent web platforms, it is advisable to work with experienced professionals who understand both AI systems and real-world deployment requirements. Businesses and startups looking to implement solutions like MetaGPT should reach out to Lead Web Praxis for expert guidance, development support, and customized AI solutions tailored to their digital transformation goals.